Enterprise LLM Strategy FAQs | Large Language Model Implementation Explained

Introduction

Enterprise investment in large language model technology has crossed a threshold in 2026 that demands a more deliberate strategic approach. The question facing senior leaders is no longer whether to deploy LLMs, but how to build the organizational and technical infrastructure that allows these models to deliver reliable, measurable business value at scale. This guide demystifies what an LLM strategy for enterprise actually entails, covering what it is, how it works, what it costs, and the decisions that separate organizations generating real returns from those still stuck in perpetual pilot mode.

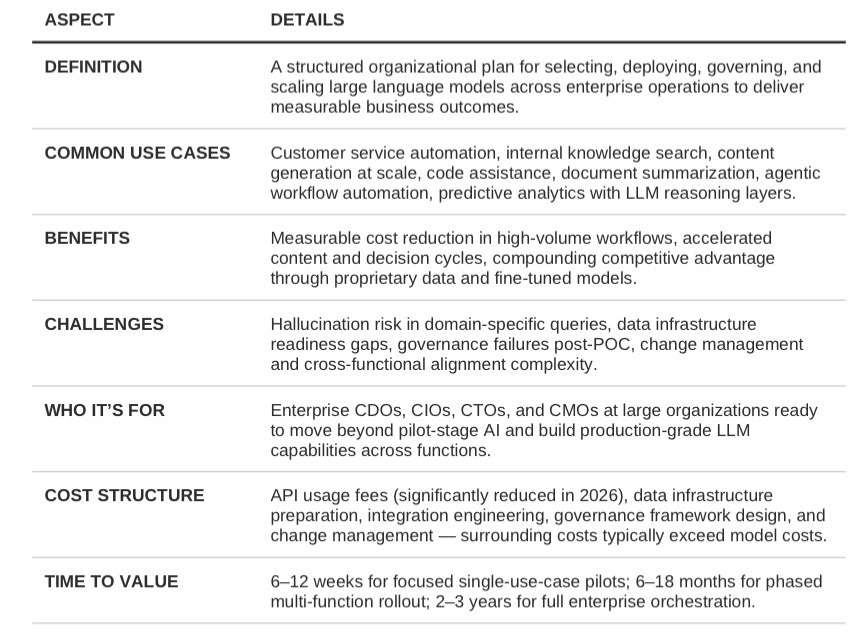

This article is written for enterprise CDOs, CIOs, CTOs, and CMOs who are past the awareness stage and now evaluating how to move from experimentation to production-grade LLM deployment. By the end, the reader will have a clear framework for scoping an LLM strategy, understanding the key implementation variables, and identifying the type of partner capable of supporting enterprise-scale execution.

Market Context: Disruption & Opportunity

The enterprise LLM landscape has undergone a structural shift that most organizations are still catching up to. What was a research-adjacent conversation in 2022 is now a capital allocation decision at the executive level. Enterprise AI spending reached $37 billion in 2025 and is accelerating, yet only 13% of enterprises report seeing organization-wide impact from their AI investments, despite over 80% having deployed generative AI APIs or applications. The gap between adoption and impact is the defining challenge of 2026.

The underlying cause of this gap is strategic, not technical. Most enterprises approached LLM adoption as a series of isolated pilots: individual use cases with narrow scope, limited integration, and no governing framework for how models interact with data, workflows, or governance standards. In 2026, the organizations pulling ahead are those that have shifted from treating LLMs as a toolset to treating them as infrastructure: a persistent capability that sits across the enterprise and compounds in value over time. For enterprise brands that have not yet made this shift, the cost of delay is increasingly measurable in lost competitive position, not just missed efficiency gains.


FAQs Snapshot

The following questions represent the most common and strategically important queries enterprise leaders raise when evaluating LLM strategy and implementation.

What is an LLM strategy for enterprise?

An LLM strategy for enterprise is a structured plan that governs how an organization selects, deploys, integrates, and scales large language models across its operations. It encompasses model selection, data architecture, governance and compliance frameworks, integration with existing enterprise systems, change management, and a phased implementation roadmap tied to measurable business outcomes. Unlike an individual AI project, an LLM strategy is designed to create a sustainable, organization-wide capability, one that evolves as model capabilities advance and business needs change. Without a deliberate strategy, most enterprise LLM initiatives remain fragmented, difficult to govern, and disconnected from the revenue and efficiency outcomes that justify investment.

What is a large language model and how does it differ from traditional AI?

A large language model is an AI system trained on vast datasets of human-generated text to understand, generate, and reason with language at a level that approximates human fluency. Unlike traditional rule-based AI or narrow machine learning models, which are trained to perform a specific task on structured data, LLMs are general-purpose reasoning systems capable of handling open-ended queries, generating content, summarizing documents, writing code, and engaging in multi-step problem solving. The enterprise implications are significant: LLMs can operate across functions (marketing, operations, legal, customer service) without being retrained for each use case, making them far more scalable than prior generations of enterprise AI.

Why does an enterprise need an LLM strategy rather than just deploying AI tools?

Deploying LLM tools without a governing strategy produces the outcome most enterprises are already experiencing: high adoption, low impact. Individual tools create data silos, inconsistent outputs, compliance gaps, and difficulty measuring ROI. An LLM strategy ensures that deployments are connected to business objectives, that data flows are governed and secure, that outputs meet quality and compliance standards, and that the organization builds cumulative capability rather than a collection of disconnected experiments. The enterprises reporting the strongest returns from LLM investment in 2026 are universally those that treated implementation as a platform decision rather than a project decision.

What are the most valuable enterprise LLM use cases in 2026?

Customer service and support automation has emerged as the clearest near-term ROI use case, capturing over 30% of enterprise LLM revenue due to measurable cost reduction and satisfaction improvement. Knowledge management and internal search, which lets employees query internal documentation, policies, and institutional knowledge through natural language, consistently delivers fast time-to-value. Content generation and personalization at scale is a strong use case for marketing-led organizations managing high-volume content workflows. More advanced use cases (agentic workflow automation, predictive analytics with LLM reasoning layers, and autonomous multi-step task completion) are gaining traction among early adopters and represent the next wave of enterprise value. The right use case prioritization depends on an organization's data maturity, integration readiness, and risk tolerance.

What is the difference between a general-purpose LLM and a domain-specific LLM?

General-purpose LLMs, such as GPT-4, Claude, and Gemini, are trained on broad datasets and capable of handling a wide range of tasks without fine-tuning. They currently represent approximately 54% of enterprise LLM deployments by revenue due to their versatility and accessibility via cloud API. Domain-specific LLMs are trained or fine-tuned on industry-specific data (such as clinical records, legal documents, or financial filings) to achieve higher accuracy and lower hallucination rates for specialized queries. Domain-specific models are growing at over 38% CAGR as enterprises mature past general-purpose pilots and begin optimizing for specific workflows where accuracy and compliance are non-negotiable. A well-constructed LLM strategy typically takes a hybrid approach: general-purpose LLMs for broad operational tasks, and domain-specific or fine-tuned models for high-stakes or regulated processes.

What is RAG and why does it matter for enterprise LLM implementation?

Retrieval-Augmented Generation (RAG) is an architectural pattern that connects an LLM to an external knowledge base, allowing the model to retrieve relevant documents or data at inference time rather than relying solely on its training data. For enterprise deployments, RAG is a critical design choice: it enables models to surface accurate, up-to-date information from internal systems without requiring continuous retraining, while keeping sensitive data out of the model's training scope. RAG has become the standard approach for enterprise knowledge management applications, internal chatbots, and any use case where factual accuracy and data recency are required. It also significantly reduces hallucination risk for domain-specific queries, which is one of the primary barriers to enterprise LLM adoption in regulated industries.
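The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration, not a production design: the documents, the `retrieve` and `build_prompt` helpers, and the bag-of-words cosine similarity are all assumptions made for the example. A real deployment would typically use an embedding model and a vector database for retrieval, and would pass the resulting prompt to an LLM.

```python
import math
import re
from collections import Counter

# Hypothetical internal documents; a real system would index enterprise content.
DOCUMENTS = {
    "travel-policy": "Employees must book flights through the travel portal "
                     "and submit receipts within 30 days.",
    "security-policy": "Laptops must use full disk encryption and passwords "
                       "must be rotated every 90 days.",
    "leave-policy": "Full-time employees accrue 20 days of paid leave per year.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query, documents, top_k=1):
    """Return the ids of the top_k documents most similar to the query."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(text))), doc_id)
              for doc_id, text in documents.items()]
    scored.sort(reverse=True)
    return [doc_id for score, doc_id in scored[:top_k] if score > 0]


def build_prompt(query, documents, top_k=1):
    """Ground the question in retrieved text instead of model memory."""
    ids = retrieve(query, documents, top_k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in ids)
    return ("Answer using only the context below.\n\n"
            f"Context:\n{context}\n\nQuestion: {query}")


print(build_prompt("How many days of paid leave do employees get?", DOCUMENTS))
```

The point of the pattern is visible in `build_prompt`: the model only sees documents fetched at query time, so updating the knowledge base changes the answers without retraining anything.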

How long does enterprise LLM implementation take?

Implementation timelines vary significantly based on organizational readiness and scope. A focused pilot targeting a single high-value use case, such as a customer service copilot or internal knowledge search, can be delivered in six to twelve weeks with the right integration infrastructure in place. A phased enterprise rollout covering multiple functions, legacy system integration, and custom model fine-tuning typically spans six to eighteen months from initial assessment to production at scale. Full enterprise orchestration, where LLM capabilities are embedded across business units, governed by a central AI platform, and continuously evaluated against business outcomes, is a two-to-three year program. Organizations that attempt to skip the phased approach and deploy at full enterprise scope in a single initiative consistently encounter governance failures, integration bottlenecks, and adoption resistance.

What does enterprise LLM implementation cost?

Cost structures vary considerably depending on deployment model, model selection, and integration complexity. API-based deployments using general-purpose models carry lower upfront infrastructure costs but ongoing usage fees that scale with volume. LLM API costs have declined approximately 80% year-over-year, with output tokens that cost $150 per million in early 2025 now priced at $25–30 per million. Custom or self-hosted deployments involve higher infrastructure investment but provide greater data control and long-term cost predictability, making them preferable for regulated industries or high-volume workflows. Beyond model costs, the primary cost drivers are data infrastructure preparation, system integration, governance framework design, and change management. Organizations that underestimate these surrounding costs, which often exceed model costs by a factor of three to five, are the most likely to experience implementation failure.
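The arithmetic behind these figures is simple enough to check directly. The sketch below uses the prices quoted above; the monthly token volume is a made-up illustration, and the function names are assumptions for the example rather than part of any pricing API.

```python
def price_decline(old_price, new_price):
    """Fractional price decline from old_price to new_price."""
    return (old_price - new_price) / old_price


def monthly_api_cost(output_tokens_per_month, price_per_million):
    """Usage-based model cost for one month of output tokens."""
    return output_tokens_per_month / 1_000_000 * price_per_million


def total_cost_range(model_cost, low_multiple=3, high_multiple=5):
    """Model cost plus surrounding costs at 3x to 5x the model cost."""
    return (model_cost * (1 + low_multiple), model_cost * (1 + high_multiple))


# $150 per million falling to $25-30 per million is a decline of roughly 80-83%.
print(f"decline: {price_decline(150, 30):.0%} to {price_decline(150, 25):.0%}")

# Hypothetical workload: 200 million output tokens per month at $30 per million.
model_cost = monthly_api_cost(200_000_000, 30)
low, high = total_cost_range(model_cost)
print(f"model: ${model_cost:,.0f}/month, all-in: ${low:,.0f} to ${high:,.0f}/month")
```

Under these assumptions the model bill is the smaller part of the budget, which is why the surrounding costs deserve their own line items in any business case.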

What are the biggest risks in enterprise LLM deployment?

Hallucination, the tendency of LLMs to generate plausible but factually incorrect outputs, remains the most operationally significant risk. Global business losses from AI hallucinations reached $67 billion in 2024, and without proper guardrails and RAG architecture, hallucination rates for domain-specific queries can reach 69–88% for legal questions and 15.6% for medical queries. Data privacy and regulatory compliance are the second major risk category, particularly for organizations operating under GDPR, HIPAA, or financial services regulations. Governance failures (deploying LLMs without clear ownership, audit trails, output monitoring, or escalation protocols) are the most common cause of enterprise AI initiatives being discontinued after proof of concept. Approximately 30% of GenAI projects are discontinued post-POC, primarily due to inadequate risk controls and unclear business value.

How does an enterprise choose the right LLM model?

Model selection should be driven by use case requirements, not brand recognition or benchmark scores. The criteria that matter for enterprise selection are accuracy for the specific task domain, latency and throughput at the required scale, compliance with applicable data residency and regulatory requirements, total cost of ownership at projected usage volumes, and vendor stability and support infrastructure. In 2026, the LLM market features over a dozen frontier models competing across a one-thousand-times price range, making cost-performance optimization a strategic lever rather than a secondary consideration. Organizations that default to the largest available model for all use cases consistently overpay; the current best practice is a hybrid strategy that deploys large general-purpose models for complex reasoning and smaller specialized models for high-volume operational tasks.
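One way to picture the hybrid strategy is as a routing rule: send each request to the cheapest model that can handle it. The sketch below is illustrative only. The model names, prices, and the single `handles_complex_reasoning` flag are hypothetical; a real selection process would weigh all of the criteria listed above, including measured accuracy and latency.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    price_per_million_tokens: float
    handles_complex_reasoning: bool


# Hypothetical catalogue; names and prices are placeholders, not real quotes.
CATALOGUE = [
    ModelOption("small-specialized", 0.50, False),
    ModelOption("large-general", 30.00, True),
]


def choose_model(needs_complex_reasoning, catalogue=CATALOGUE):
    """Pick the cheapest model that satisfies the task's reasoning needs."""
    candidates = [m for m in catalogue
                  if m.handles_complex_reasoning or not needs_complex_reasoning]
    if not candidates:
        raise ValueError("no model in the catalogue can handle this task")
    return min(candidates, key=lambda m: m.price_per_million_tokens)


# High-volume classification goes to the small model,
# multi-step contract analysis goes to the large one.
print(choose_model(needs_complex_reasoning=False).name)
print(choose_model(needs_complex_reasoning=True).name)
```

Defaulting every request to the largest model is equivalent to removing the `min` step, which is where the overpayment comes from.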

What internal capabilities does an enterprise need to execute an LLM strategy?

Successful LLM strategy execution requires cross-functional alignment across IT, security, legal, and business operations, not just an AI team working in isolation. The specific capabilities required include data engineering to prepare and govern the data foundations that LLMs depend on, ML operations (MLOps) to monitor model performance and manage deployment pipelines, prompt engineering and evaluation expertise to ensure output quality, and change management capability to drive adoption across business units. Many enterprises find that the limiting factor is not access to models but the maturity of their data infrastructure and their ability to integrate LLM outputs into existing workflows in ways that employees will actually use. This is where a strategic implementation partner with cross-functional enterprise experience generates the most value.

Can LLM capabilities scale with enterprise growth?

Scalability is one of the primary arguments for investing in a structured LLM strategy rather than point solutions. Cloud-based LLM deployments, which account for approximately 62% of enterprise implementations, are inherently elastic and can scale with usage demand without proportional infrastructure investment. The architectural patterns that support scale (RAG, modular agent design, and standardized integration layers) are also the patterns that support governance and quality control as deployment expands. The organizations that struggle to scale are those that built early pilots on ad hoc architectures without standardization. An LLM strategy designed with scalability as a first-order requirement, not an afterthought, avoids the costly re-architecture that most enterprises eventually face when trying to move from departmental deployment to enterprise-wide adoption.

Benefits of Enterprise LLM Strategy & Implementation

A well-executed LLM strategy for enterprise delivers compounding value across multiple dimensions of business performance. Organizations that move from isolated pilots to a governed, integrated enterprise LLM capability report measurable improvements in operational throughput, content quality, and decision speed. Specifically: customer service organizations achieve significant cost reduction through automation of high-volume, routine interactions while routing complex cases to human agents more efficiently; marketing and content teams sharply reduce time-to-market for personalized communications; and knowledge-intensive functions including legal, compliance, and finance gain the ability to synthesize large document volumes and surface relevant precedents in seconds rather than hours. The most durable benefit, however, is organizational learning: enterprises that build LLM capability as a platform accumulate proprietary data, fine-tuned models, and workflow integrations that become increasingly difficult for competitors to replicate, creating a compounding advantage that grows over time rather than depreciating.

Quick Summary Table

Deep-Dive Sections

What an LLM Strategy Is and Why It Matters

An enterprise LLM strategy is a governance and execution framework that answers four fundamental questions: which models will be deployed and for which purposes; how those models will connect to enterprise data and systems; who owns output quality, compliance, and continuous improvement; and how LLM capabilities will evolve alongside advancing model technology and changing business requirements. It matters because without this framework, enterprises consistently produce the same failure pattern: high adoption of AI tools, low evidence of business impact, and growing governance risk as usage scales beyond what informal oversight can manage. The shift that defines the most successful enterprise AI programs in 2026 is treating LLM capability as infrastructure (a persistent, centrally governed platform) rather than a portfolio of individual projects. This shift requires executive sponsorship, cross-functional ownership, and a willingness to invest in the data foundations and integration architecture that determine whether LLM outputs are trustworthy enough to act on at scale.

How Enterprise LLM Implementation Works

Enterprise LLM implementation follows a phased architecture. The first phase is foundation: assessing data readiness, identifying high-value use cases against an effort-impact matrix, selecting deployment architecture (cloud API, hybrid, or self-hosted), and establishing governance standards including output monitoring, escalation protocols, and audit trail requirements. The second phase is pilot deployment: building a production-grade implementation for one or two priority use cases, integrating with relevant enterprise systems via API or middleware, establishing evaluation pipelines to measure output quality and business impact, and gathering organizational learning before scaling. The third phase is enterprise rollout: standardizing integration patterns across business units, building the internal MLOps capability to monitor and iterate on deployed models, and establishing the feedback loops that allow models to improve continuously from real enterprise usage. The architectural patterns that underpin scalable enterprise LLM implementation (RAG for knowledge grounding, agent frameworks for multi-step task automation, and centralized LLM gateway infrastructure for governance and cost management) are now well-established and supported by mature tooling.
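The effort-impact matrix used in the foundation phase can be made concrete with a small scoring exercise. Everything in the sketch below is an assumption for illustration: the 1-to-5 scores, the threshold of 3, the quadrant labels, and the rule of picking pilots from the "quick win" quadrant by impact-to-effort ratio. An actual assessment would derive scores from discovery work, not hard-coded numbers.

```python
# Hypothetical use cases scored 1 (low) to 5 (high) for impact and effort.
USE_CASES = [
    {"name": "customer service copilot", "impact": 5, "effort": 2},
    {"name": "internal knowledge search", "impact": 4, "effort": 2},
    {"name": "autonomous multi-step agents", "impact": 5, "effort": 5},
    {"name": "marketing content generation", "impact": 3, "effort": 3},
]


def quadrant(use_case, threshold=3):
    """Place a use case in one of the four effort-impact quadrants."""
    high_impact = use_case["impact"] >= threshold
    low_effort = use_case["effort"] < threshold
    if high_impact and low_effort:
        return "quick win"
    if high_impact:
        return "major project"
    if low_effort:
        return "fill-in"
    return "deprioritize"


def select_pilots(use_cases, max_pilots=2):
    """Choose up to max_pilots quick wins, best impact-to-effort ratio first."""
    quick_wins = [u for u in use_cases if quadrant(u) == "quick win"]
    quick_wins.sort(key=lambda u: u["impact"] / u["effort"], reverse=True)
    return [u["name"] for u in quick_wins[:max_pilots]]


for uc in USE_CASES:
    print(f"{uc['name']}: {quadrant(uc)}")
print("pilot candidates:", select_pilots(USE_CASES))
```

Capping the selection at two mirrors the pilot phase described above, where one or two priority use cases are taken to production before anything is scaled.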

When to Build an LLM Strategy (and When Not To)

An enterprise LLM strategy is the right investment when an organization has identified specific, high-value use cases with clear ROI hypotheses, has the data infrastructure to support model integration, and has executive alignment on treating AI as a strategic platform rather than a technology experiment. It is the wrong investment when the organization is pursuing AI capability primarily for competitive signaling rather than operational need, when data quality and governance foundations are immature, or when there is no clear ownership model for AI output quality and compliance. The failure to articulate "what problem this solves" before investing in implementation is a primary driver of the roughly 30% post-POC discontinuation rate. Use case clarity is not a prerequisite for starting the strategy conversation; it is the output of a well-run discovery and assessment process. Organizations that skip discovery and move directly to implementation, however, consistently encounter avoidable failure.

Tools and Platforms Involved

Enterprise LLM strategy and implementation involves a layered technology stack. At the model layer, leading general-purpose models include GPT-4 and GPT-5, Claude, Gemini, and Llama 4 for organizations pursuing self-hosted deployments. At the integration layer, retrieval-augmented generation frameworks, vector databases for semantic search, and enterprise middleware platforms connect models to existing data sources and workflows. At the governance layer, LLM gateway infrastructure manages routing, caching, cost control, and policy enforcement across model usage, reducing costs by 50–80% through caching repeated queries while maintaining centralized oversight. At the orchestration layer, agent frameworks including LangChain, LangGraph, and vendor-native agent platforms such as Salesforce Agentforce enable multi-step autonomous workflows. Platform selection should be driven by existing enterprise architecture, compliance requirements, and the specific integration demands of priority use cases, not by vendor marketing or technology novelty.
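To make the gateway idea concrete, the sketch below shows the caching piece in isolation: every request goes through one entry point, and exact repeat prompts are served from memory instead of being sent to the model again. The `CachingGateway` class and `stub_backend` function are hypothetical names for this example. Real gateway products add routing, policy enforcement, cache expiry, and often semantic (not just exact-match) caching, none of which is modeled here.

```python
class CachingGateway:
    """Toy LLM gateway: a single entry point that caches repeat prompts."""

    def __init__(self, backend):
        self._backend = backend  # callable(model, prompt) -> response text
        self._cache = {}
        self.hits = 0
        self.misses = 0

    def complete(self, model, prompt):
        """Return a cached response when the same prompt was seen before."""
        # Collapse whitespace so trivially different repeats share a key.
        key = (model, " ".join(prompt.split()))
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        response = self._backend(model, prompt)
        self._cache[key] = response
        return response

    def hit_rate(self):
        """Share of requests answered from the cache."""
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


def stub_backend(model, prompt):
    """Stand-in for a paid model call; a real backend would call an LLM API."""
    return f"[{model}] response to: {prompt}"


gateway = CachingGateway(stub_backend)
gateway.complete("large-general", "Summarize the travel policy.")
gateway.complete("large-general", "Summarize the  travel policy.")  # repeat
gateway.complete("large-general", "Summarize the leave policy.")
print(f"cache hit rate: {gateway.hit_rate():.0%}")
```

Only cache misses reach the paid backend, so the achievable savings depend on how repetitive the real query traffic is. Because all traffic passes through one object, the same place is also a natural home for usage logging and access policy.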

Scalability and Flexibility

The enterprise LLM market is projected to grow from $5.91 billion in 2026 to $48.25 billion by 2034, reflecting the sustained enterprise demand for scalable AI infrastructure. The architectural decisions made during initial LLM strategy design have long-term implications for an organization's ability to scale without re-architecture. Enterprises that build on standardized integration patterns, modular agent designs, and centralized governance infrastructure can expand LLM capability to new use cases and business units without rebuilding from scratch. Conversely, organizations that build early pilots on ad hoc architectures (custom integrations, ungoverned model access, and inconsistent evaluation practices) consistently face costly re-architecture as they try to scale. Flexibility is also a function of model selection: an enterprise LLM strategy that creates vendor lock-in with a single model provider sacrifices the ability to adopt better-performing or more cost-effective models as the market continues to evolve rapidly.
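For readers who want to translate the market projection into an annual growth rate, the implied compound annual growth rate (CAGR) follows from the two endpoints. The helper name below is an assumption for the example; the inputs are the figures quoted above, treated as eight years of growth from 2026 to 2034.

```python
def implied_cagr(start_value, end_value, years):
    """Compound annual growth rate implied by a start value and an end value."""
    return (end_value / start_value) ** (1 / years) - 1


# $5.91 billion in 2026 growing to $48.25 billion by 2034.
cagr = implied_cagr(5.91, 48.25, 2034 - 2026)
print(f"implied CAGR: {cagr:.1%}")
```

Under that reading, the projection works out to roughly 30% growth per year.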

Common Misconceptions About Enterprise LLM Strategy

The most persistent misconception is that LLM strategy is primarily a technology decision. In practice, the organizations reporting the strongest enterprise LLM outcomes consistently identify change management, cross-functional governance, and data quality as the determining factors, not model selection or technical architecture. The second misconception is that larger models are always better. In 2026, the operational reality is that smaller, domain-focused language models can outperform large general-purpose models on specific, high-volume enterprise tasks at a fraction of the cost, and the enterprises deploying hybrid model strategies are achieving better ROI than those defaulting to the largest available model for all use cases. The third misconception is that hallucination is an inherent, unsolvable problem. While hallucination risk is real and significant, it is an engineering problem that can be managed through RAG architecture, output validation pipelines, and domain-specific fine-tuning, rather than a fundamental barrier to enterprise deployment.

How G&CO. Can Help

G&CO. works with enterprise brands at the intersection of strategy, technology, and experience design to build LLM capabilities that deliver measurable business outcomes, not proof-of-concept demonstrations. Through our LLM Strategy & Implementation practice, we support organizations from initial use case discovery and data readiness assessment through architecture design, integration engineering, and the governance frameworks required to operate LLM systems responsibly at scale. Our cross-functional model means that LLM strategy is designed in context with the customer experience, commerce, and brand objectives it is intended to serve, not as a standalone technology project disconnected from business outcomes. We bring real-world implementation experience across the enterprise AI stack, an understanding of what failure looks like before it becomes expensive, and the organizational change management expertise to ensure that LLM capabilities are actually adopted by the people and teams they are built for.

G&CO. is a minority business enterprise (MBE), as certified by the National Minority Supplier Development Council (NMSDC). If supplier diversity is part of your procurement process, contact us; we may be a great fit for your enterprise.

Conclusion & Next Steps

Enterprise LLM strategy in 2026 is no longer a forward-looking investment — it is a present competitive reality. The organizations already compounding returns are those that moved from pilot to platform eighteen to twenty-four months ago; those still in perpetual experimentation mode are watching the gap widen. The path from where most enterprises currently sit — high adoption, low organizational impact — to where the leaders are operating is not primarily a technology problem. It is a strategy, governance, and integration problem, and it is solvable with the right framework and the right partner.

The next step is not a technology evaluation. It is a use case and readiness assessment: identifying the two or three workflows where LLM capability would generate the clearest, most defensible business impact, and honestly evaluating the data infrastructure and organizational readiness required to deliver it. That assessment is the foundation of a strategy worth investing in.

At G&CO., we've worked alongside enterprise clients to implement similar shifts, whether through digital strategy, AI integration, or platform modernization. Our expertise enables brands to translate AI capability into tangible competitive advantage. Still have questions? Reach out and let's solve them together.
